What Most Think, Why It’s Wrong, & What Cancer Centers Really Need

Originally posted on The Cancer Letter February 27, 2026

Executive Summary:

Radiology departments have tried for years to “own” or “fix” clinical trials imaging assessment workflows by extending clinical tools (PACS, worklists, dictation systems) and habits (speed, seamlessness, few clicks) into the research world. The intent is great: protect radiologists’ time, reduce friction, and avoid burnout. But the result is predictable: fragmented processes, delayed reads, protocol errors, endless follow-up emails, and costly rework for study teams.

Cancer centers need to stop pretending that a homegrown system with an acronym, or a bolted-on point solution integrated with the radiology PACS, equals a “system.” What’s required is a purpose-built, end-to-end clinical trials imaging platform that lives on the web, connects investigators, coordinators, and readers, and enforces trial-specific workflows, measurements, and data structures, without forcing everything through radiology’s clinical pipeline. [1,2,6,7]

How We Got Here:

Applying Clinical Paradigms to Research Realities

- Protocol-specific response criteria that can be modified and vary for each trial (RECIST, RANO, Lugano, irRECIST, etc.) [3,4,5]

- Defined timing windows, target lesions, longitudinal comparisons, and change thresholds

- Auditable source data requirements and investigator workflows [2,10]

- Data handoffs to sponsors, CROs, and central review organizations

- Heavy documentation and query resolution cycles, often months after the read
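To make the “change thresholds” and “longitudinal comparisons” above concrete, here is a minimal Python sketch of a target-lesion response calculation using the default RECIST 1.1 thresholds [3]. It is an illustration under stated assumptions, not any vendor’s implementation: the names `TimePoint` and `target_response` are hypothetical, and the sketch deliberately ignores non-target lesions, new lesions, and the lymph-node short-axis rule, all of which a real protocol would also require.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TimePoint:
    """Target-lesion diameters (mm) recorded at one imaging visit (hypothetical type)."""
    label: str
    diameters_mm: tuple[float, ...]

    @property
    def sum_mm(self) -> float:
        return sum(self.diameters_mm)


def target_response(baseline: TimePoint, prior: list[TimePoint], current: TimePoint) -> str:
    """Classify target-lesion response with RECIST 1.1 default thresholds.

    Simplified on purpose: non-target lesions, new lesions, and the
    lymph-node short-axis rule are not modeled here.
    """
    current_sum = current.sum_mm
    # Nadir: smallest sum of diameters on study so far, baseline included.
    nadir = min(tp.sum_mm for tp in [baseline, *prior])

    if current_sum == 0:
        return "CR"  # all target lesions have disappeared
    # PD: at least a 20% increase over nadir AND at least 5 mm absolute increase.
    if current_sum >= 1.2 * nadir and current_sum - nadir >= 5.0:
        return "PD"
    # PR: at least a 30% decrease from the baseline sum.
    if current_sum <= 0.7 * baseline.sum_mm:
        return "PR"
    return "SD"


baseline = TimePoint("baseline", (60.0, 40.0))   # sum 100 mm
visit_2 = TimePoint("cycle 2", (40.0, 25.0))     # sum 65 mm
visit_3 = TimePoint("cycle 4", (50.0, 30.0))     # sum 80 mm

print(target_response(baseline, [], visit_2))         # PR
print(target_response(baseline, [visit_2], visit_3))  # PD
```

The second call shows why the longitudinal record matters: 80 mm is still 20% below baseline, yet it counts as progression because the comparison is against the 65 mm nadir, a value a reader cannot know without the prior time points.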

Trying to force clinical trial research into a clinical PACS mold has created more friction and frustration for everyone, especially outside radiology.

What Radiology Usually Thinks Is Needed, and Why It Doesn’t Solve the Core Problems

“Never Leave the Seat”

What they think: If we integrate our PACS and EHR with the research system, we’ll be fine.

Why it’s wrong: Integration shaves seconds, not hours. Most NCI-designated centers do fewer than 10 research reads per day, yet the unmanageable workflow makes even this small volume highly disruptive. Saving 10 seconds per read through “fewer clicks” is meaningless when each read takes 30 minutes because of manual PACS reading processes and then triggers another 30 minutes of follow-ups to resolve issues. These problems occur because PACS can’t:

- Track patients’ response assessments longitudinally across cycles of therapy

- Pre-fetch the correct comparison source imaging data to be ready to read

- Provide worklists to direct reads to trained readers on a trial-by-trial basis

- Display and guide compliance with protocol criteria or align with past measurements

- Perform response calculations

- Drive traceable communication and collaboration directly with coordinators and investigators
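The arithmetic behind “seconds, not hours” can be checked in a few lines of Python. The figures are the ones quoted in this section; the only assumption is that `reads_per_day` is set to 10, the upper bound of “fewer than 10,” which is the most favorable case for the click-saving argument.

```python
# Figures quoted in the text above; reads_per_day = 10 is an assumed upper bound.
reads_per_day = 10
seconds_saved_per_read = 10
read_minutes = 30
follow_up_minutes = 30

minutes_per_read = read_minutes + follow_up_minutes
daily_workload_minutes = reads_per_day * minutes_per_read
daily_savings_minutes = reads_per_day * seconds_saved_per_read / 60
savings_share = daily_savings_minutes / daily_workload_minutes

print(f"Daily research-read workload: {daily_workload_minutes} min")
print(f"Daily time saved by fewer clicks: {daily_savings_minutes:.1f} min")
print(f"Share of workload saved: {savings_share:.2%}")
```

Under these assumptions the click savings come to under two minutes against roughly ten hours of combined reading and follow-up, well below one percent of the workload.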

“Fewer Mouse Clicks = Better”

- Isn’t guided to the right slice/lesion from the last time point

- Has to transpose measurements into the cancer center's source files manually

- Responds to endless clarification emails because nothing is connected

Some radiology departments purchase standalone tumor measurement point solutions, but they are still left with no cancer center workflows, no site-specific investigator workflows, no web access, and no protocol flexibility. Ultimately, those tools create another silo, driving more manual re-entry and more confusion.

“One-and-Done Reports”

Why it’s wrong: A clinical read and a clinical trial research read are not the same report. They can (and often do) differ legitimately. Trial protocols define progression differently from clinical practice. Doing both simultaneously doesn’t improve productivity, and mixing them increases error rates.

- A small, trained set of radiologists (internal or external) performs research reads part-time or in reading cores.

- Clinical radiologists stay focused on clinical throughput.

- Research data stays protocol-compliant, traceable, and separate, without compromising patient care.

The Myth of “We Already Have a System”

Observed reality:

Bottom Line:

What’s Really Needed—A Purpose-Built, End-to-End Clinical Trials Imaging Platform

A platform purpose-built for clinical trial imaging is defined by a few key characteristics:

1. Protocol-Centric Workflows that

- Encode flexible, modifiable criteria (RECIST, RANO, etc.) and visit schedules up front.

- Enforce correct, protocol-compliant lesion selection, measurements, and calculations—no guesswork.

2. Reader Guidance & Traceability to

- Auto-navigate readers to the correct images and prior targets.

- Capture every measurement and decision point with timestamps and users.

3. Protocol-Centric Workflows that

- Allow coordinators, investigators, radiologists, and QA staff to work in one place.

- No “radiology-only” silos. Sponsors/CROs can receive endpoints immediately.

4. De-Identification & Secure Sharing to

- Automate compliant de-identification and streamline internal or external reads.

- Eliminate CD-ROMs, ad hoc file-sharing, and emailing PHI.

5. Concurrency & Audit Support to

- Log every action. Queries can be resolved with context, not guesswork.

- Simplify re-reviews, adjudications, and reader switching when required.

6. Data Delivery & Analytics so

- Results flow downstream automatically; no manual transposition.

- Dashboards display status, turnaround times, and bottlenecks in real time.
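As one illustration of the traceability items above (capturing every measurement with timestamps and users, and logging every action), a minimal append-only audit trail might look like the following Python sketch. The class and field names (`AuditEvent`, `AuditTrail`, `lesion_id`) are hypothetical and chosen for illustration; a production system would also need persistent storage, access control, and tamper protection, none of which is shown.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditEvent:
    """One immutable record of who did what, to which lesion, and when."""
    user: str
    action: str
    lesion_id: str
    value_mm: Optional[float]
    timestamp: datetime


@dataclass
class AuditTrail:
    """Append-only log: corrections are added as new events, never overwritten."""
    _events: list[AuditEvent] = field(default_factory=list)

    def record(self, user: str, action: str, lesion_id: str,
               value_mm: Optional[float] = None) -> AuditEvent:
        event = AuditEvent(user, action, lesion_id, value_mm,
                           datetime.now(timezone.utc))
        self._events.append(event)
        return event

    def history(self, lesion_id: str) -> tuple[AuditEvent, ...]:
        """Return every event for one lesion, in the order it was recorded."""
        return tuple(e for e in self._events if e.lesion_id == lesion_id)


trail = AuditTrail()
trail.record("reader_a", "measure", "T1", 42.0)
trail.record("reader_b", "correct", "T1", 41.0)

for event in trail.history("T1"):
    print(event.timestamp.isoformat(), event.user, event.action, event.value_mm)
```

Because the original measurement stays in the log next to its correction, a query raised months later can be answered from the record itself instead of from memory or email threads.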

Stop forcing clinical trial imaging research through clinical pipes. Seek out the best workflow for the research pipeline, and every stakeholder wins.

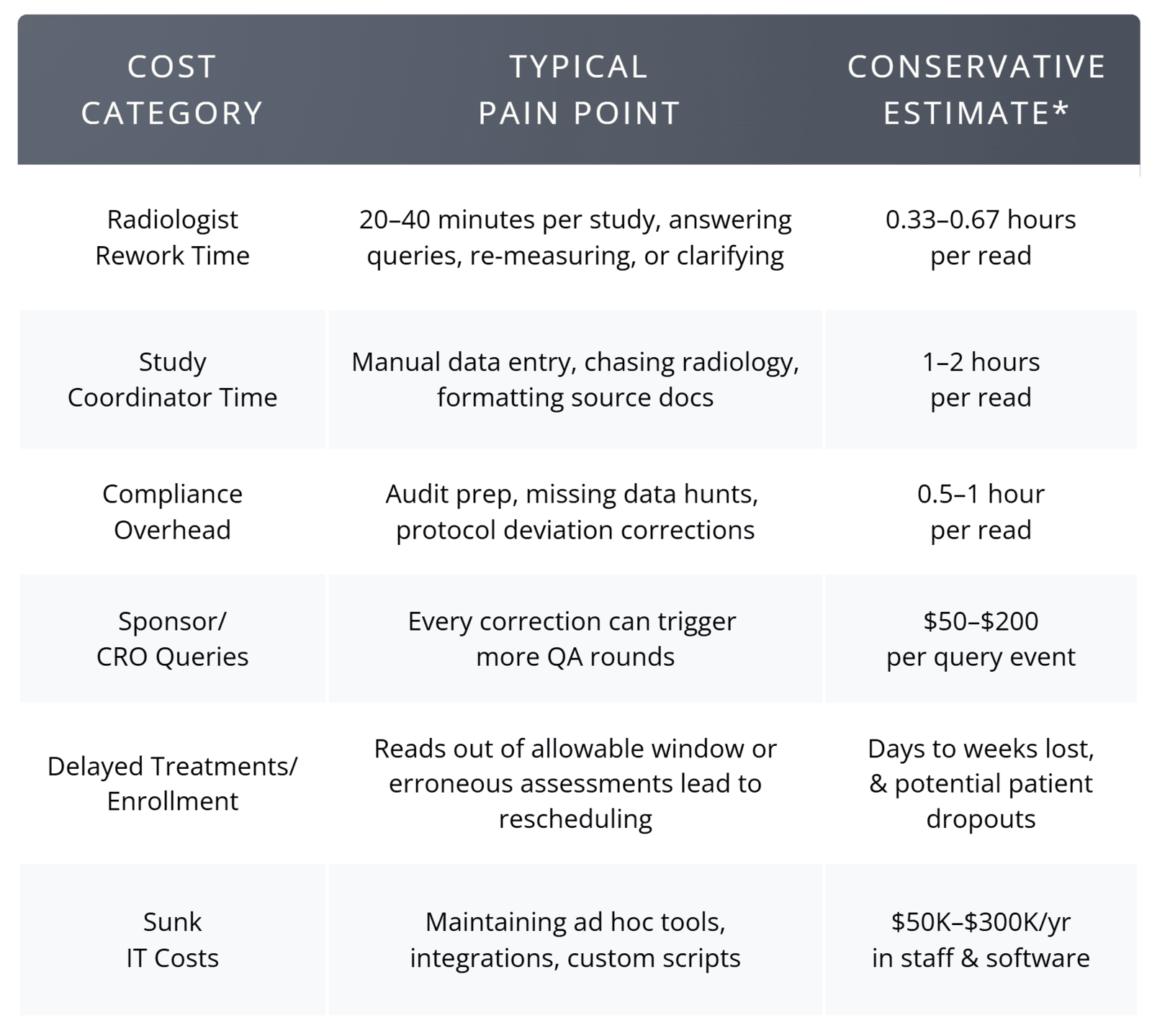

Cost & Impact Summary

Direct Costs of the Current Approach

The Organizational Impact of Poor Workflow

The Upside of Doing It Right

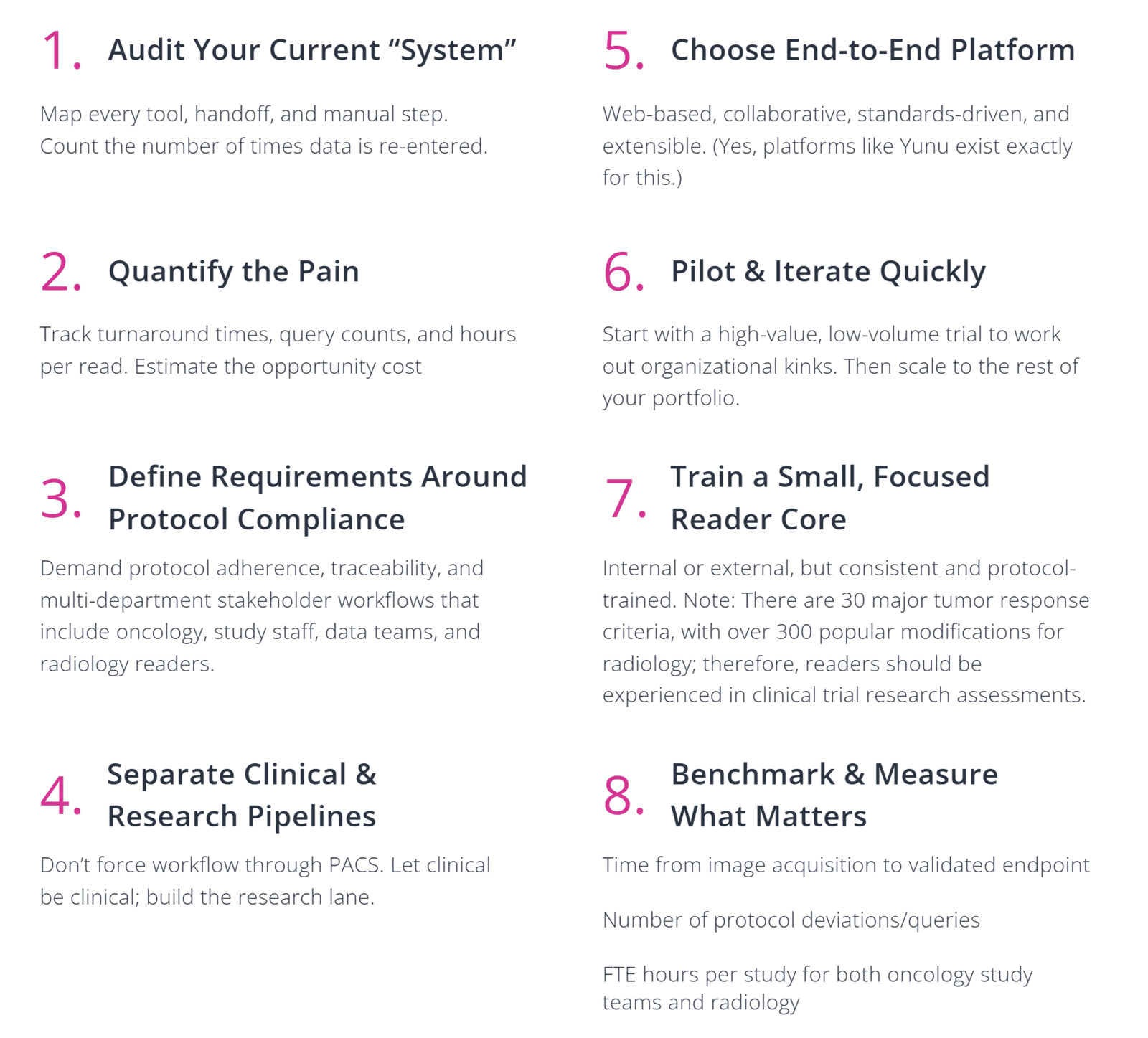

Your Next Steps (Practical Checklist)

Final Thought

Cancer centers are judged by their ability to deliver accurate and timely clinical trial imaging data that advances trials and benefits both patients and sponsors. Radiology’s clinical efficiency mindset does not translate to research success. It’s time to stop pretending it does. Select a system designed for clinical trials imaging, connect the stakeholders who actually use the data, and measure success by the speed and quality of your endpoints, not by how many clicks a radiologist made.

The ambition to “transform” research imaging through clinical tools failed. The good news? The fix is clear, implementable, and already in use at over a quarter of all NCI-designated cancer centers that have refused to settle for less. In fact, you can check out Yunu’s full end-to-end platform in less time than it took to read this article. Ready to find a better way?

References

1. Sorenson, J. (2025, July 18). Imaging’s ride to the bottom in clinical trials—and why it matters now. The Cancer Letter. https://cancerletter.com/sponsored-article/20250718_4/

2. Fotenos, A. (2018, September 20). Update on FDA approach to safety issue of gadolinium retention after administration of gadolinium-based contrast agents (Presentation by Anthony Fotenos, MD, PhD, Lead Medical Officer, Division of Medical Imaging Products). U.S. Food and Drug Administration. https://www.fda.gov/media/116492/download

3. Eisenhauer, E. A., Therasse, P., Bogaerts, J., Schwartz, L. H., Sargent, D., Ford, R., Dancey, J., Arbuck, S., Gwyther, S., Mooney, M., Rubinstein, L., Shankar, L., Dodd, L., Kaplan, R., Lacombe, D., & Verweij, J. (2009). New response evaluation criteria in solid tumours: Revised RECIST guideline (version 1.1). European Journal of Cancer, 45(2), 228–247. https://doi.org/10.1016/j.ejca.2008.10.026

4. Wen, P. Y., Macdonald, D. R., Reardon, D. A., Cloughesy, T. F., Sorensen, A. G., Galanis, E., DeGroot, J., Wick, W., Gilbert, M. R., Lassman, A. B., Tsien, C., Mikkelsen, T., Wong, E. T., Chamberlain, M. C., Stupp, R., Lamborn, K. R., Vogelbaum, M. A., van den Bent, M. J., & Chang, S. M. (2010). Updated response assessment criteria for high-grade gliomas: Response Assessment in Neuro-Oncology Working Group. Journal of Clinical Oncology, 28(11), 1963–1972. https://doi.org/10.1200/JCO.2009.26.3541

5. Cheson, B. D., Fisher, R. I., Barrington, S. F., Cavalli, F., Schwartz, L. H., Zucca, E., & Lister, T. A. (2014). Recommendations for initial evaluation, staging, and response assessment of Hodgkin and non-Hodgkin lymphoma: The Lugano classification. Journal of Clinical Oncology, 32(27), 3059–3067. https://doi.org/10.1200/JCO.2013.54.8800

6. Herzog, T. J., Wahab, S. A., Mirza, M. R., Pothuri, B., Vergote, I., Graybill, W. S., Malinowska, I. A., York, W., Hurteau, J. A., Gupta, D., González-Martin, A., & Monk, B. J. (2024). Concordance between investigator and blinded independent central review in gynecologic cancer clinical trials. International Journal of Gynecological Cancer. Advance online publication. https://www.international-journal-of-gynecological-cancer.com/article/S1048-891X(24)01777-8/fulltext

7. Yunu. (2024). 50% imaging error rate in clinical trials discussed by expert panelists from 5 NCI-designated cancer centers. https://www.yunu.io/blogs/post/50-imaging-error-rate-in-clinical-trials-discussed-by-expert-panelists-from-5-nci-designated-cancers

8. Hicks, L. (2022, February 18). Disrespect from colleagues is a major cause of burnout, radiologists say. Medscape. https://www.medscape.com/viewarticle/968776?form=fpf

9. Rula, E. Y. (2024, July 3). Radiology workforce shortage and growing demand: Something has to give. American College of Radiology. https://www.acr.org/Clinical-Resources/Publications-and-Research/ACR-Bulletin/Radiology-Workforce-Shortage-and-Growing-Demand-Something-Has-to-Give

10. International Council for Harmonisation Expert Working Group. (2025). ICH harmonised guideline: Guideline for good clinical practice E6(R3). https://database.ich.org/sites/default/files/ICH_E6%28R3%29_Step4_FinalGuideline_2025_0106.pdf